Bazaarvoice Contextual Commerce™ for enterprise

Digital customer journeys as unique as your shoppers

Granify is now part of the Bazaarvoice platform

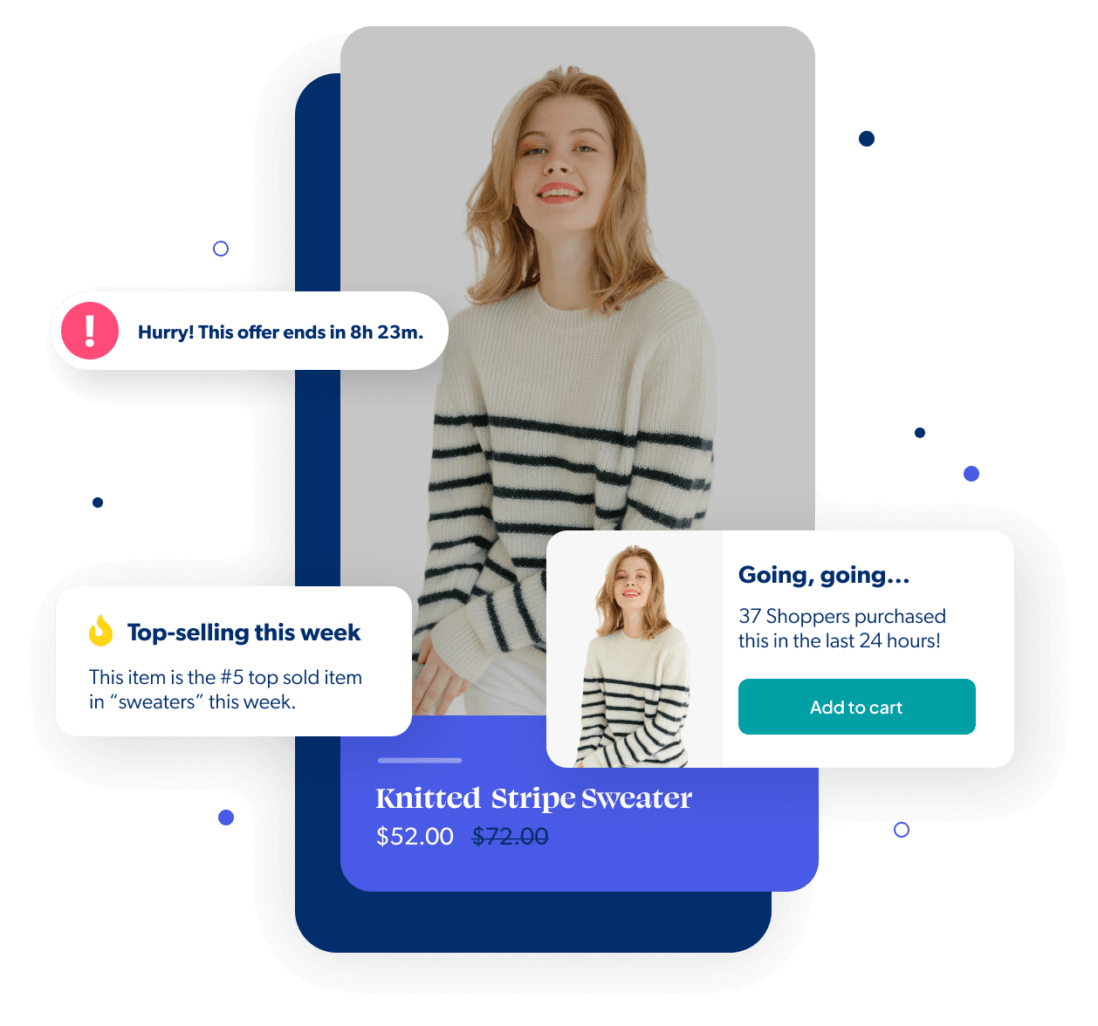

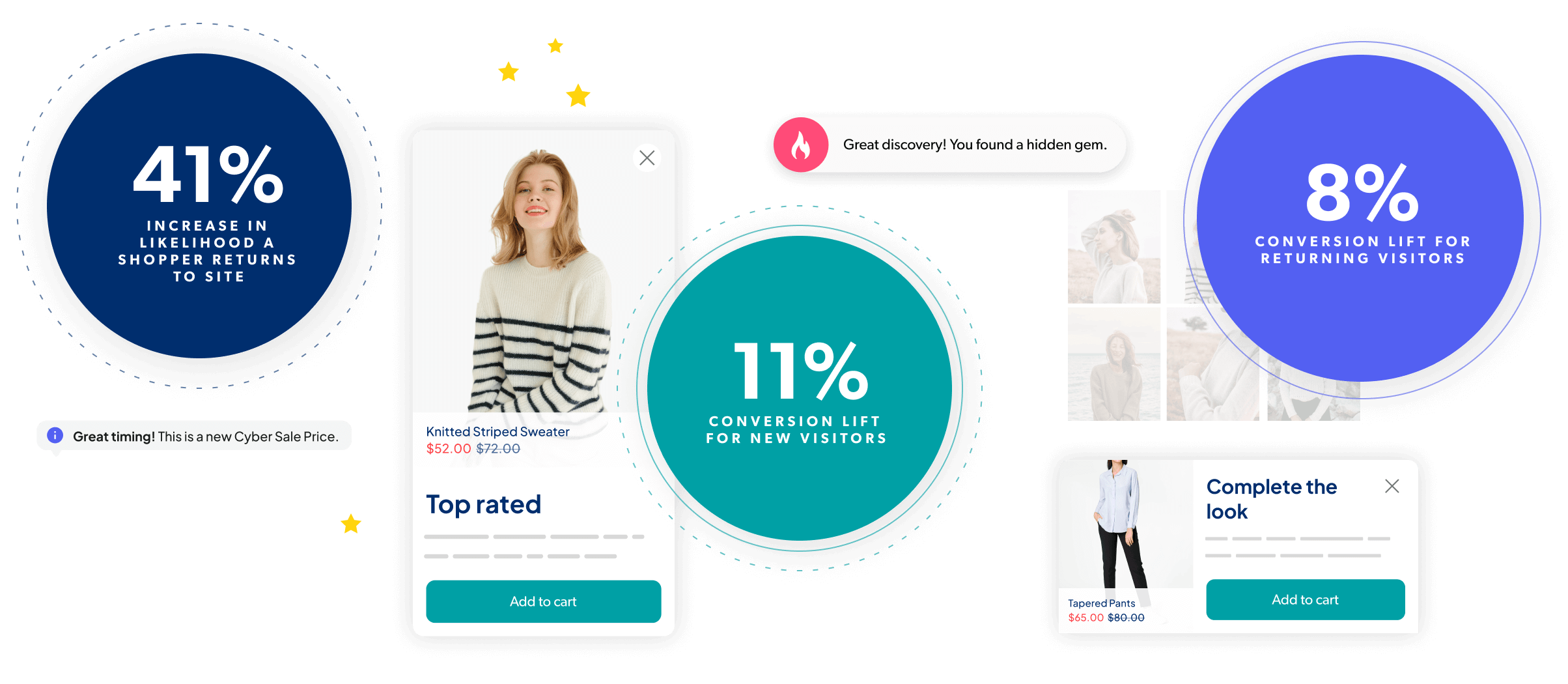

Deliver perfectly timed, intelligently tailored shopping experiences that delight shoppers, leverage social proof, and drive urgency, all while boosting incremental sales from your website traffic.

Trusted by the world’s largest retailers, including:

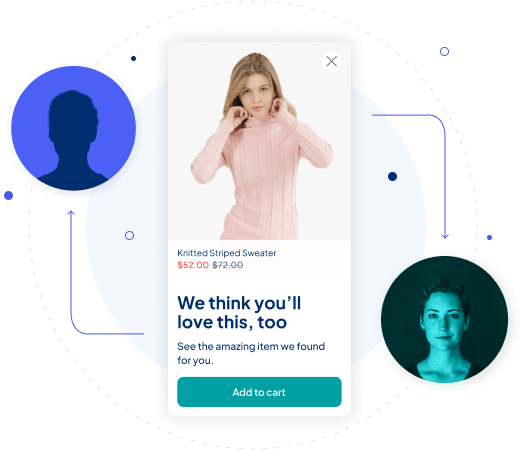

The Bazaarvoice Contextual Commerce brain

Our machine learning technology collects valuable insights into each shopper’s digital body language, compares them against accumulated data sets, and identifies ideal pathways to conversion. It determines when to show messages and, equally important, when not to.

ENHANCE EVERY MOMENT

Intelligent tech that drives incremental revenue

With advanced machine learning, you can deliver the highly relevant experiences today’s shoppers crave, at exactly the right moment. It’s tech that improves over time so you’ll see more revenue from it – true incremental revenue – above and beyond the results you’re already seeing today.

DRIVE MORE REACH

Personalized experiences for both known and unknown shoppers

We use shoppers’ real-time behavioral data (not PII) to create dynamic, contextualized shopping experiences. This means you can use Contextual Commerce on its own or combine it with your other personalization offers and, either way, everyone who visits your website gets the best experience (whether new to the site or not).

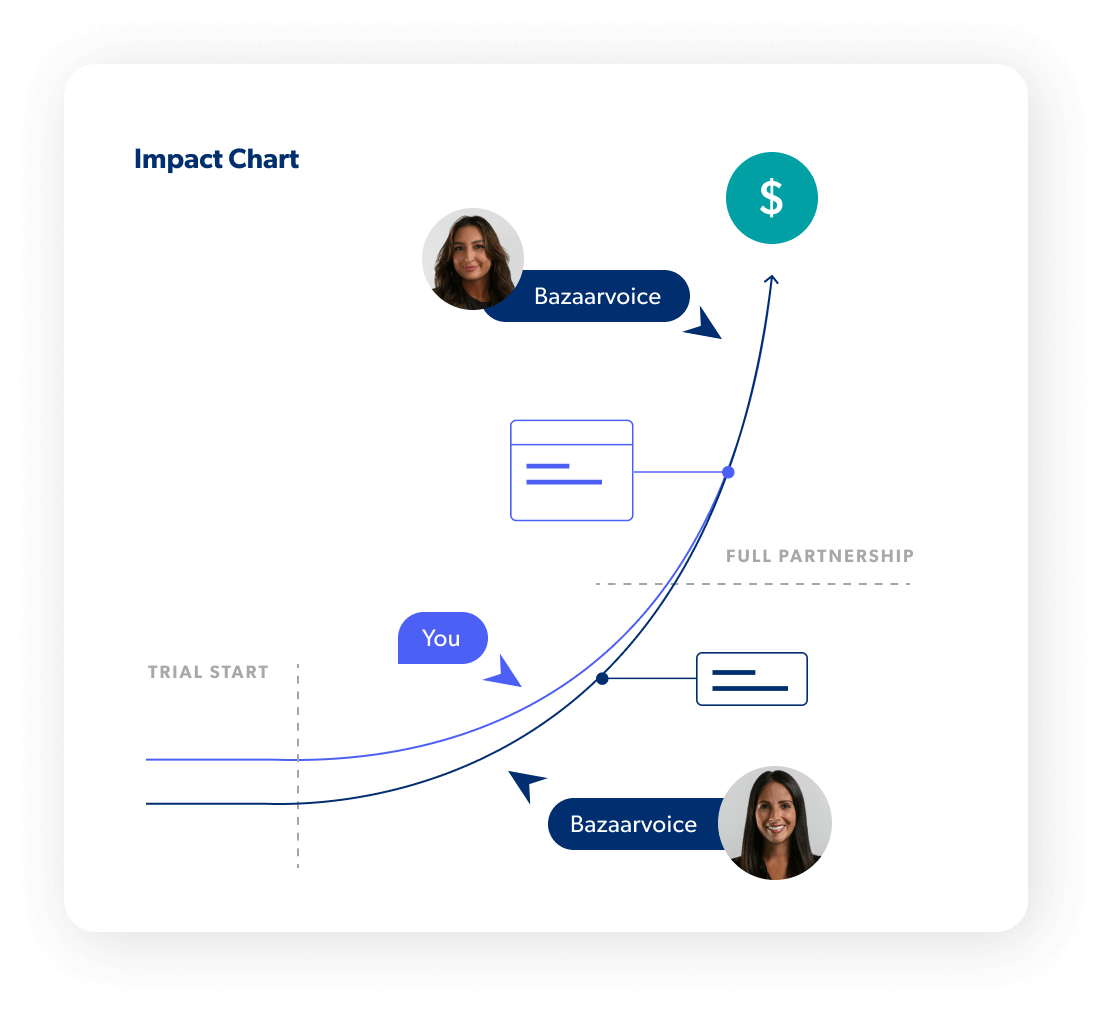

A true partnership

We do all the heavy lifting

White-glove service means we’re bringing our chops to this partnership. You’ll get proactive strategic guidance from dedicated experts to drive the right outcomes, faster implementation, a program that works without requiring you to dedicate resources to it, and ongoing support that molds to your needs (not the other way around).

Only pay for proven impact

We start with a proof-of-value trial period so you can be confident in the incremental revenue we’ll generate. After that, we charge only for the incremental revenue you wouldn’t have without us. And we make sure you always see results. It’s a no-risk, surefire path to revenue uplift.

FAQs

What is contextual commerce?

Contextual commerce is the process of creating dynamic, AI-powered online shopping experiences using website visitors’ real-time actions and behaviors during their current session.

While personalization and contextualization are closely connected, they are distinct approaches. Unlike personalization, contextual commerce is an automated approach that interprets each shopper’s digital body language to anticipate their needs. This can include everything from the shopper’s current location and device to their individual actions.

Because contextualization continually adapts and tailors the customer experience in real time, it’s more dynamic and responsive than personalization. The result is relevant information and recommendations that align with each shopper’s immediate needs.

To learn more, check out our e-book on Contextualization and Personalization: the difference between them, implementation methods, and how these two strategies can work together to supercharge your commerce engine.

What is digital body language?

Digital body language represents an online shopper’s intent, preferences, and engagement on a website through behaviors such as clicks, scrolls, and search queries.

Digital body language is similar to the physical cues an in-person shopper gives in a brick-and-mortar store (such as searching for a specific item or giving a sales associate the evil side-eye).

For example, when a website visitor exhibits a series of back-to-back clicks in the same spot, this might indicate urgency or a problem with the website’s content. Another example is prolonged inactivity, which could suggest disinterest or that the website is lacking the right information.

By interpreting digital body language, contextualization technology can both understand and anticipate each shopper’s needs and preferences, resulting in a more intuitive and personalized experience.
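For readers who want a concrete picture, the two signals described above (back-to-back clicks in the same spot, and prolonged inactivity) can be sketched in a few lines of Python. This is a simple illustrative heuristic, not a description of how Contextual Commerce works internally. The `Event` type, the function names, and every threshold (3 clicks, 2 seconds, 30 pixels, 60 seconds idle) are assumptions chosen for the example.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Event:
    """One hypothetical session event; the field names are illustrative."""
    t: float        # seconds since the session started
    kind: str       # e.g. "click", "scroll", or "search"
    x: float = 0.0  # pointer position in pixels (used for clicks only)
    y: float = 0.0


def has_rapid_repeat_clicks(events, min_clicks=3, window_s=2.0, radius_px=30.0):
    """Return True if at least min_clicks clicks fall within radius_px of
    some starting click and within window_s seconds after it."""
    clicks = sorted((e for e in events if e.kind == "click"), key=lambda e: e.t)
    for i, first in enumerate(clicks):
        run = [
            c for c in clicks[i:]
            if c.t - first.t <= window_s
            and hypot(c.x - first.x, c.y - first.y) <= radius_px
        ]
        if len(run) >= min_clicks:
            return True
    return False


def longest_idle_gap(events, session_end):
    """Longest stretch (seconds) with no events, from session start (t=0)
    through session_end. Assumes session_end is not before the last event."""
    points = [0.0] + sorted(e.t for e in events) + [session_end]
    return max(later - earlier for earlier, later in zip(points, points[1:]))


def read_signals(events, session_end, idle_threshold_s=60.0):
    """Summarize both assumed signals for one session."""
    return {
        "rapid_repeat_clicks": has_rapid_repeat_clicks(events),
        "prolonged_inactivity": longest_idle_gap(events, session_end) >= idle_threshold_s,
    }


session = [
    Event(1.0, "click", 100, 100),
    Event(1.4, "click", 102, 101),
    Event(1.9, "click", 99, 98),
    Event(5.0, "scroll"),
]
signals = read_signals(session, session_end=90.0)
```

In this sample session, three clicks land almost on top of each other within a second, and nothing happens between the scroll at 5 seconds and the end of the session at 90 seconds, so both signals are flagged. A production system would weigh many more behaviors and would learn its thresholds from data rather than hard-coding them.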

Express your interest

Contextualization works best for companies with sufficient website traffic. If this sounds like you, check to see if Contextual Commerce is a good fit for your business situation and goals.
